Threads has introduced Account Status, a feature previously exclusive to Instagram. The addition is a significant step for the Meta-owned platform, which aims to make post visibility and moderation practices more transparent. In a climate where online expression often tests the boundaries of community standards, the rollout is a welcome respite for users who may feel at the mercy of opaque content moderation systems.

Understanding Post Moderation

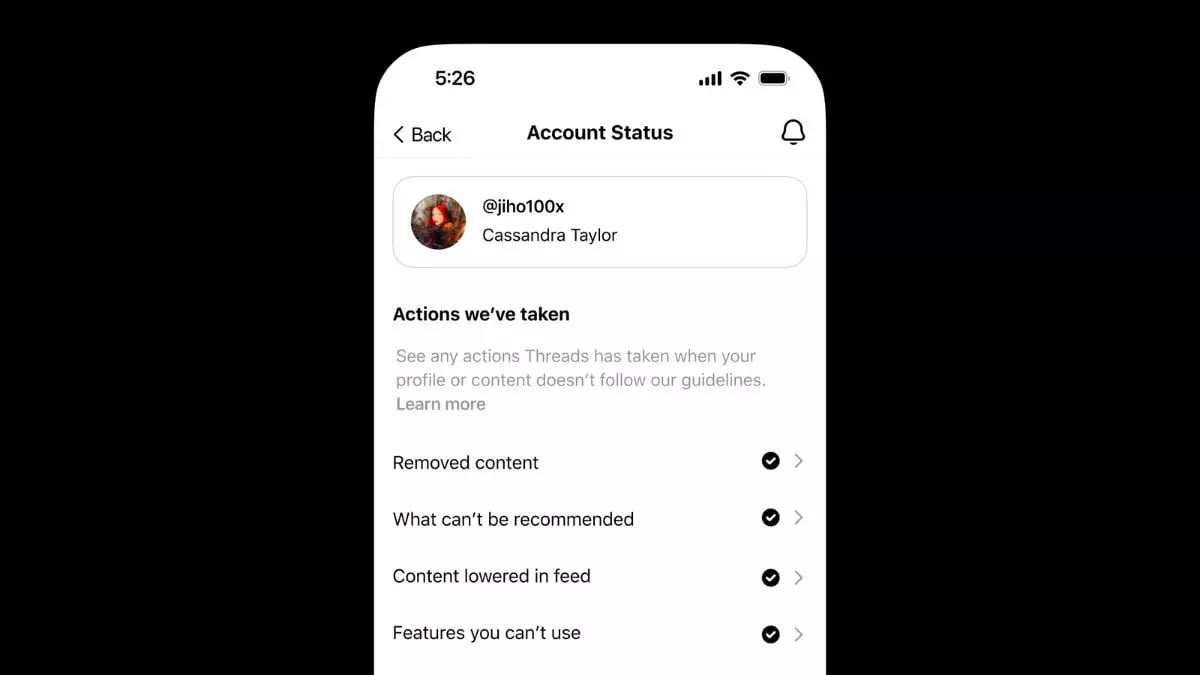

The core of the new Account Status feature is its ability to demystify the fate of a user’s posts. Users can now see whether their posts have been removed, demoted, or flagged for violating the platform’s community standards, so they no longer have to guess about the status of their contributions. They can visit their settings to see what actions have been taken against their content, which fosters a sense of ownership and responsibility.

In a digital landscape rife with misinformation, such transparency is not just refreshing; it’s essential. By listing actions under labels such as “what can’t be recommended” and by indicating when a post’s visibility has been lowered, Threads enhances user agency. Individuals can understand not only what they can express but also how that expression is treated under the community standards.

Opportunities for Appeal

Another noteworthy aspect of this update is the appeal process. Users who disagree with a moderation decision on their post can report it and request a review that could reinstate their content. This introduces an element of fairness and a check against arbitrary censorship, making it a critical tool for user advocacy. Imagine a user putting effort into a thoughtful post only to find it hidden without a clear reason; the ability to seek redress turns that frustration into a more constructive dialogue between the platform and its users.

Moreover, the notification system that alerts users once their appeal has been reviewed adds a layer of communication that many platforms overlook. It’s not merely about maintaining standards; it’s about engaging users through an informed process and fostering trust.

Balancing Expression and Safety

However, the implementation of this feature is not without its challenges. While Threads emphasizes that expression is paramount, it walks a tightrope between upholding free speech and ensuring safety, authenticity, and dignity. The guidelines suggest a nuanced approach that weighs the public interest value of potentially harmful content. It’s commendable that the platform is prepared to allow certain posts that might otherwise breach its guidelines if they are significantly newsworthy, a gesture that acknowledges the complexities involved in content moderation.

But this commitment to transparency and standards raises a question: Can Threads find a consistent balance? The digital ecosystem changes rapidly, and what is acceptable content today could be contentious tomorrow. The introduction of context-based evaluations of ambiguous language is admirable, but it calls for a robust and consistently applied framework that doesn’t dilute the standards it seeks to uphold.

Threads’ new Account Status feature reflects a pivotal shift towards giving users information about moderation and the ability to take part in the process. While challenges remain, the platform’s proactive stance on moderating and on communicating transparently with users is a strong move towards a more accountable and user-friendly platform.
