Nvidia has open-sourced components of its Run:ai platform, a significant step toward making AI infrastructure more widely accessible. The centerpiece is the KAI Scheduler, a Kubernetes-native scheduling solution that reflects Nvidia’s strategic shift to prioritize both community collaboration and enterprise capability. Now available under the Apache 2.0 license, KAI Scheduler is open to contributions from developers, data scientists, and IT professionals. The initiative strengthens Nvidia’s value proposition while nurturing an ecosystem where innovation is driven by collective effort.

This decision underscores a broader trend in the tech industry, where openness and collaboration are increasingly recognized as catalysts for rapid advancement. The move to open-source the KAI Scheduler sends a powerful message: Nvidia is not just building tools for enterprise use; it is creating a community-oriented approach to AI infrastructure that has the potential to foster groundbreaking advancements in machine learning and beyond.

Tackling the Challenges of GPU Management

In the booming field of AI, managing GPU workloads presents several persistent challenges that can stymie progress. Traditional resource schedulers often fall short in environments where workloads are dynamic and demand for computing power fluctuates. The KAI Scheduler is designed to address this inherent variability of AI workloads. An engineer might start with a single GPU for a straightforward interactive task, only to need multiple GPUs for intensive distributed training shortly thereafter. The KAI Scheduler is engineered to adapt to these changing demands without manual intervention.

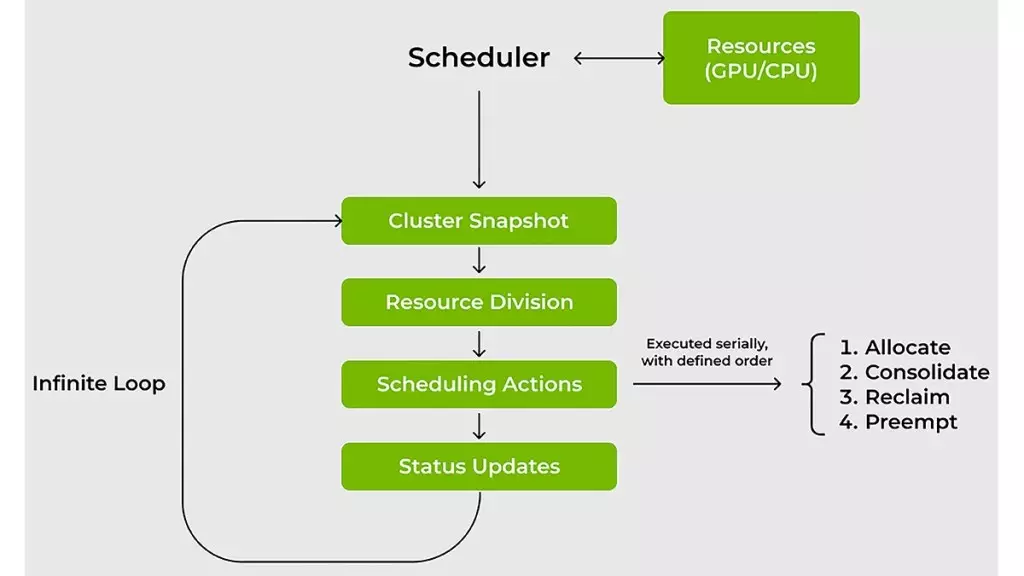

By recalculating fair-share values in real time and dynamically adjusting quotas, the KAI Scheduler aims to keep resources allocated efficiently without relying on manual oversight. This lets teams focus on their core objectives rather than getting bogged down in logistics. The approach improves productivity and raises the utilization of expensive GPU resources, which makes it especially valuable for machine learning engineers.
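To make the idea of fair-share recalculation concrete, here is a minimal sketch in Python. It is not KAI Scheduler's actual algorithm: the `Queue` fields (`quota`, `weight`, `demand`) and the `fair_shares` function are hypothetical names chosen for illustration, and the weighted water-filling rule is only one plausible way to split surplus capacity. Weights are assumed to be positive.

```python
from dataclasses import dataclass


@dataclass
class Queue:
    """Hypothetical queue description, for illustration only."""
    name: str
    quota: float   # GPUs guaranteed to the queue
    weight: float  # relative claim on surplus GPUs (assumed > 0)
    demand: float  # GPUs the queue is currently asking for


def fair_shares(queues, total_gpus):
    """Return {queue name: GPUs} using quota first, then weighted surplus."""
    # Step 1: each queue receives its guaranteed quota, but never more
    # than it actually requests.
    shares = {q.name: min(q.quota, q.demand) for q in queues}
    remaining = total_gpus - sum(shares.values())

    # Step 2: split the surplus among queues that still want more,
    # proportionally to weight, repeating as queues become satisfied.
    active = [q for q in queues if q.demand - shares[q.name] > 1e-9]
    while remaining > 1e-9 and active:
        total_weight = sum(q.weight for q in active)
        distributed = 0.0
        for q in active:
            offer = remaining * q.weight / total_weight
            take = min(offer, q.demand - shares[q.name])
            shares[q.name] += take
            distributed += take
        remaining -= distributed
        active = [q for q in active if q.demand - shares[q.name] > 1e-9]
    return shares
```

Calling `fair_shares` again whenever a queue's `demand` changes is what "recalculation" means in this sketch. For example, with 8 GPUs and three queues that each hold a quota of 2, a queue asking for 8 GPUs ends up with 4 while its neighbors ask for 3 and 1; if the middle queue goes idle, the same call hands its GPUs to the busy queue.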

Accelerating Workflows with Enhanced Scheduling Techniques

For machine learning engineers, the race against time is a familiar dilemma. Every moment spent waiting for compute is a lost opportunity. The KAI Scheduler addresses this urgency through scheduling strategies such as gang scheduling, in which all the pods of a job start together or not at all, and GPU sharing. Its hierarchical queuing system lets users submit batches of jobs and step away while the scheduler launches tasks as resources become available and in line with priorities. This handling of tasks minimizes wait times and keeps compute resources in use.
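The all-or-nothing behavior behind gang scheduling can be sketched in a few lines of Python. This is an illustrative toy, not KAI Scheduler code: `gang_schedule`, the `free_gpus` mapping, and the first-fit placement rule are assumptions made for the example, and a first-fit pass can miss placements that a smarter search would find.

```python
def gang_schedule(free_gpus, pod_requests):
    """Place every pod of a job, or none of them.

    free_gpus:    dict mapping node name -> free GPU count (hypothetical model)
    pod_requests: list of GPU counts, one entry per pod in the job
    Returns a list of (gpus, node) pairs, or None if the whole gang
    cannot be placed. free_gpus is only modified on success.
    """
    tentative = dict(free_gpus)  # work on a copy so failure has no side effects
    placement = []
    # Place the largest pods first; they are the hardest to fit.
    for need in sorted(pod_requests, reverse=True):
        node = next((n for n, free in tentative.items() if free >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole job waits
        tentative[node] -= need
        placement.append((need, node))
    free_gpus.update(tentative)  # commit the reservation for the whole gang
    return placement
```

The key design point is the tentative copy: a distributed training job that can only get some of its workers would otherwise hold GPUs while making no progress, so nothing is reserved until every pod has a slot.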

Furthermore, to combat underutilization, a common issue in shared clusters, the KAI Scheduler employs two strategies: bin-packing and workload spreading. Bin-packing maximizes compute utilization by filling partially used capacity with smaller tasks, which reduces fragmentation. Spreading distributes load evenly across nodes so that no individual node becomes a bottleneck. Together, these strategies reduce the waste caused by the ad hoc GPU allocation practices seen in many organizations.
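The difference between the two strategies comes down to which eligible node is chosen for a new task. The Python sketch below shows that choice under simplified assumptions: `pick_node`, the `free_gpus` mapping, and the strategy names `"binpack"` and `"spread"` are hypothetical and are not taken from KAI Scheduler's configuration.

```python
def pick_node(free_gpus, need, strategy):
    """Choose a node for a task that needs `need` GPUs.

    free_gpus: dict mapping node name -> free GPU count (hypothetical model)
    strategy:  "binpack" picks the fullest node that still fits the task;
               "spread" picks the emptiest node.
    Returns the node name, or None if no node has enough free GPUs.
    """
    candidates = {n: free for n, free in free_gpus.items() if free >= need}
    if not candidates:
        return None
    if strategy == "binpack":
        # Least free capacity first; ties are broken by node name.
        return min(candidates, key=lambda n: (candidates[n], n))
    if strategy == "spread":
        # Most free capacity first; ties are broken by node name.
        return min(candidates, key=lambda n: (-candidates[n], n))
    raise ValueError(f"unknown strategy: {strategy}")
```

With nodes that have 8, 3, and 1 free GPUs, a 2-GPU task goes to the 3-GPU node under bin-packing, leaving the 8-GPU node whole for a large job, and to the 8-GPU node under spreading, keeping per-node load low.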

Streamlining Complexity through Integration

Navigating the landscape of AI tools and frameworks can be a daunting task for many teams. The configuration required to integrate workloads with platforms such as Kubeflow or Ray can stifle innovation and delay development cycles. KAI Scheduler reduces this complexity with a built-in podgrouper, which simplifies the interaction between workloads and tools. By automatically detecting and connecting with these frameworks, the KAI Scheduler shortens the development process and lets teams move from idea to execution faster.
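One way to picture what automatic grouping involves is to collect pods by the workload object that owns them, so that the scheduler can treat each workload as a unit. The Python sketch below is purely illustrative and makes no claim about how the podgrouper is implemented: the `group_pods` function and the dictionary layout (`name`, `owner`, `kind`) are hypothetical.

```python
from collections import defaultdict


def group_pods(pods):
    """Group pod names by their owning workload.

    pods: list of dicts with a "name" key and an optional "owner" dict
          holding "kind" and "name" (hypothetical layout for this sketch).
    Returns {(owner kind, owner name): [pod names]}. A pod with no owner
    forms a group of its own.
    """
    groups = defaultdict(list)
    for pod in pods:
        owner = pod.get("owner")
        if owner:
            key = (owner["kind"], owner["name"])
        else:
            key = ("Pod", pod["name"])
        groups[key].append(pod["name"])
    return dict(groups)
```

In this toy model, two workers created by the same Ray cluster land in one group, while a standalone notebook pod stays on its own, with no per-framework configuration written by the user.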

This built-in automation not only reduces the burden of manual configuration but also encourages a more agile approach to AI development, where experimentation and iteration are prioritized. In a fast-paced environment where the ability to pivot quickly is paramount, such enhancements are invaluable.

Nvidia’s forward-thinking approach with the KAI Scheduler not only resolves the practical challenges associated with resource management but also serves as a blueprint for future innovations in the AI sphere. By prioritizing open source, efficient allocation, and simplified integration, Nvidia is setting a new standard for AI infrastructure that others in the industry will likely aspire to emulate.
