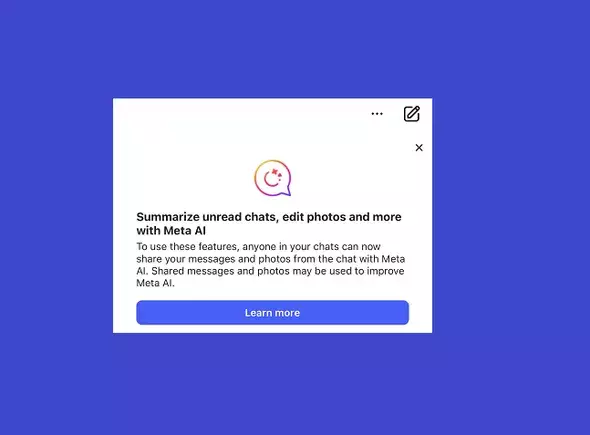

In recent days, Meta users have been greeted with a pop-up notification in their messaging apps. The notice informs them that Meta's artificial intelligence tools are being integrated into chats on platforms such as Facebook, Instagram, Messenger, and WhatsApp. The feature promises better communication through AI-assisted responses, but its implications for user privacy deserve close examination. This article looks at the potential ramifications, the underlying concerns, and why user awareness matters when navigating this new feature.

Meta’s aim is to create a seamless experience where users can directly engage with AI to resolve queries as they chat. By simply tagging @MetaAI within a conversation, users can receive real-time assistance without needing to switch to a dedicated AI chat. While this feature might initially seem convenient and innovative, it raises significant questions regarding user privacy and data handling.

With the introduction of this AI, information shared in a chat could potentially be collected and used for AI training. Meta implies that it takes steps to anonymize this data. However, the mere possibility that personal messages, photos, or other sensitive information could end up in an AI training dataset is alarming. Repurposing personal data in this way can have serious consequences, especially for users who do not realize that their private conversations may be used to train AI.

The functionality offered by Meta AI comes at a significant cost: the potential exposure of personal data. An AI assistant that interprets and responds to conversational context is undoubtedly appealing. However, it forces a crucial question about what we are willing to give up for convenience. Many users assume that their chats are private and may not fully grasp that integrating AI could turn their shared content into fodder for machine learning.

Meta advises users against sharing sensitive information, such as passwords or specific private identifiers, reminding them to be mindful before seeking AI assistance within their conversations. This cautionary note raises further concerns about the platform’s responsibility in ensuring user safety. It shifts the onus of data protection onto the user, rather than establishing rigorous safeguards by default.

A central issue here is user consent. Most users have already agreed to Meta's extensive terms of service, a lengthy document full of legal jargon that few people read in its entirety. The implication is troubling: simply by using Meta's applications, users are treated as having consented to their data being used in ways they may not fully understand.

In practice, users cannot opt out of this data sharing without taking drastic measures, such as avoiding Meta's apps entirely. This reflects a broader concern about the ethics of consent in digital spaces. Users should be proactively informed about their rights and about the implications of any new tools being introduced.

The introduction of Meta AI into private messaging is a technological leap that raises essential questions about privacy, consent, and user agency. The convenience of AI within chat platforms is undeniable, but it must not override the fundamental right to privacy. Users now face a new layer of complexity in their communications: weighing the appeal of technological advancement against an awareness of what their participation entails.

To protect their privacy, users should consider engaging with the AI outside their private chats, preserving a degree of confidentiality in their conversations. Technological innovation can be beneficial, but responsibility for safeguarding personal data rests with both users and the platforms that serve them. Meta's initiative offers a glimpse of the future of communication, and it is also a critical reminder that privacy is an ongoing negotiation in our digital lives.
