In an era where digital interactions are woven into everyday life, platform giants like Meta are under increasing pressure to demonstrate genuine concern for their youngest users. While some critics argue that corporate interests often overshadow protective measures, Meta’s latest initiatives suggest a deliberate move to prioritize teen safety. These updates are more than superficial tweaks; they represent a strategic overhaul aimed at creating a safer, more aware environment. The introduction of real-time safety prompts and simplified blocking tools signals a recognition that the digital landscape requires proactive and accessible protective features, especially for impressionable users.

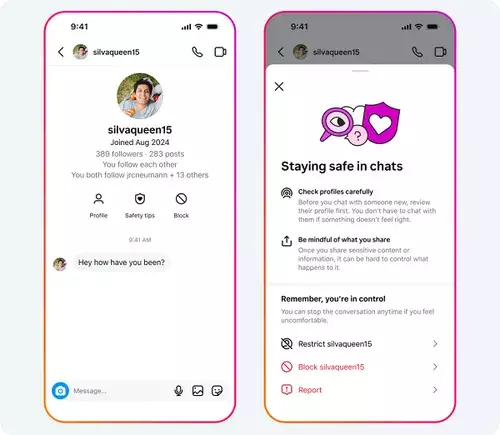

Furthermore, Meta’s effort to highlight account creation dates and offer immediate blocking options can be viewed as an essential step toward transparency and user empowerment. In a space rife with predators, scammers, and harmful content, these measures provide tools that teenagers can use instinctively, fostering a sense of agency. Yet the question remains: will these features translate into meaningful change, or merely serve as incremental fixes? Their success hinges on awareness, education, and the willingness of teenagers to use these tools consistently, which requires ongoing effort beyond technological solutions alone.

Streamlining Enforcement and Detection of Harmful Behavior

The expanded protection measures reflect a deeper understanding of the complexities involved in online safety. Meta’s implementation of a combined block and report feature demonstrates a keen awareness of user friction: walking teens through multiple steps to report abuse can be discouraging. Making this process seamless aligns with broader behavioral insights that suggest ease increases compliance. Still, the effectiveness of such measures depends on the platform’s commitment to responsive moderation.

Meta’s acknowledgment of the persistent problem—removing hundreds of thousands of accounts involved in sexual misconduct directed at minors—is telling. It exposes the ongoing threat that adult-managed accounts pose, acting as gateways for exploitation. Despite these efforts, the sheer volume of malicious accounts indicates a cat-and-mouse game where predators adapt quickly. This raises concerns about the sufficiency of current detection systems, which, while extensive, can never be foolproof. The platform’s capacity to swiftly identify, flag, and remove offending accounts remains a critical battleground in safeguarding minors.

Moreover, the persistent sexualization of younger users underscores systemic societal issues that no technological fix alone can resolve. Meta’s ongoing removal of harmful accounts suggests a reactive approach rather than a preventative one, which highlights the need for broader regulatory measures and societal shifts alongside platform efforts.

Balancing Privacy, Awareness, and Control

The measures introduced, such as location data protections, nudity filters, and restrictions on messaging, signal an understanding that safety is multifaceted. Meta’s claim of effectiveness rests on high engagement with protective features, like the nudity filter being active 99% of the time, and that indicates some success. Yet these statistics are also an uncomfortable reminder that some minors may still be exposed to undisclosed risks, especially in environments where they might not yet fully grasp the boundaries of online interactions.

This dilemma feeds into a larger debate: Should platforms be more intrusive in monitoring and controlling adolescent behavior, or should they prioritize empowering teens with the knowledge and tools to self-regulate? Meta’s approach seems to favor the latter, emphasizing awareness campaigns and easier access to safety features. While commendable, it also risks positioning teens as primarily responsible for managing their safety—an unrealistic expectation given their age and maturity levels. Effective safety protocols must strike a balance that respects privacy and autonomy, while still providing adequate oversight and intervention capabilities.

The Broader Policy Landscape and Future Directions

Meta’s support for raising the minimum age of social media access in the EU is a notable strategic stance, aligning corporate interests with regulatory trends. Raising the minimum age to 15 or 16 signifies a recognition that younger users are particularly vulnerable and that their introduction to social media should be delayed until they are more mature. It also reflects an understanding that protective measures need societal and legislative reinforcement to be truly effective.

Yet there is an inherent tension here: while Meta advocates for higher age restrictions, the digital economy depends heavily on young users. Stricter age limits might reduce engagement, cut into revenue, and challenge the very business models that propel these platforms forward. Nonetheless, supporting such limits also positions Meta as a responsible actor, willing to embrace regulation to a degree, potentially earning trust from parents and policymakers.

Overall, Meta’s ongoing efforts to enhance teen safety reflect a complex balancing act: striving to protect the vulnerable while navigating commercial interests and societal pressures. The real test will be how these strategies evolve, how transparent the platform remains about their efficacy, and whether it can foster a genuinely safer digital space for its youngest, most impressionable users.
