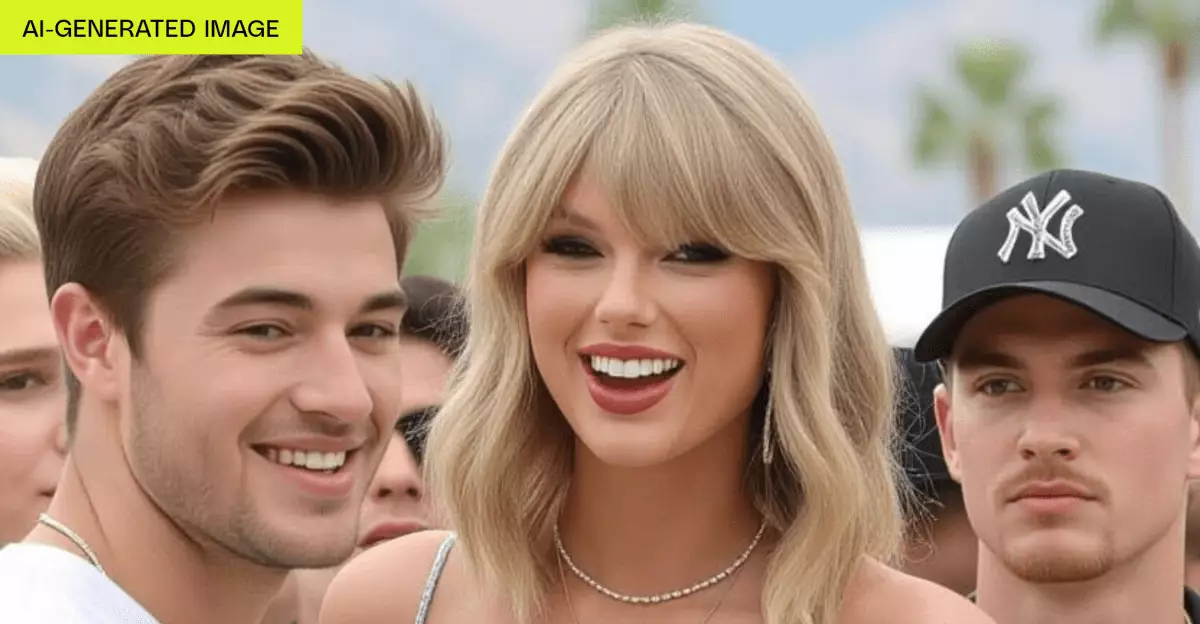

Artificial intelligence has changed how we generate visual content, from art to video, and has given creators far more freedom. Beneath this innovation, however, lies a troubling lack of safeguards. The recent surge of AI tools that let users create highly realistic images and videos, including deepfakes of celebrities and minors, underscores a stark truth: without rigorous controls, these technologies become playgrounds for abuse rather than instruments of artistry. AI developers often tout their platforms as safe or regulated, yet cases like Grok Imagine reveal a dangerous gap between policy and practice. The absence of effective safeguards is not just an oversight; it is an open invitation to malicious actors, who can generate offensive, non-consensual, or even illegal content with minimal effort.

Superficial Constraints and the Exploitation of Loopholes

One of the most alarming aspects of many AI content generators is their superficial approach to regulation. Despite claiming to have policies against explicit or harmful material, these platforms often lack the technical mechanisms to enforce them. Grok Imagine, for example, provides a “spicy” mode that explicitly produces suggestive videos, including nudity or partial nudity, often with recognizable celebrity likenesses. It does so openly, generating images and videos that violate common standards of decency and, in some cases, legal protections. Such blatant disregard for ethical boundaries exemplifies a wider trend: policies are worded in vague or non-binding language, or are undermined by weak verification. Age checks are rudimentary or nonexistent and can be bypassed with minimal technical know-how. As a result, minors can access and generate inappropriate content, which risks psychological harm and the normalization of exploitation.

The Ethical and Legal Implications of Unchecked AI Content

The lack of safeguards is not just an industry failure but a societal concern. Realistic fake images and videos can have devastating effects regardless of the creator's intent. Celebrities have been targeted with deepfakes that tarnish reputations, spread misinformation, or incite harassment. Non-consensual adult material depicting recognizable figures not only breaches their privacy but also raises legal questions around harassment and defamation. When platforms openly encourage suggestive content without substantial verification or moderation, they amplify these risks. When companies prioritize profit or user satisfaction over ethical responsibility, they undermine the integrity of digital content and erode public trust. As AI-generated media becomes indistinguishable from reality, the potential for harm grows sharply, making robust safeguards not just desirable but imperative.

Why the Industry Must Embrace Responsible AI Development

It is troubling how many AI developers treat safety measures as afterthoughts, choosing instead to capitalize on the novelty and profitability of unrestricted content creation. Responsible AI development requires a proactive approach: strict content filters, real-time moderation, comprehensive age verification, and clear legal policies. Without these, the technology will be exploited, perpetuating cycles of harm and misinformation. The industry must adopt ethical guidelines that meet or exceed existing laws, such as the U.S. Take It Down Act, which targets non-consensual intimate imagery, including AI-generated deepfakes. Only through genuine regulation will AI tools serve as catalysts for positive creativity instead of conduits for exploitation. Developers should treat transparency, accountability, and user education as cornerstones of a safer digital ecosystem.

The AI content creation landscape is evolving rapidly, but our safeguards are not keeping pace. It is incumbent upon developers, regulators, and society at large to demand strict accountability. Ignoring these dangers only fosters a digital climate where abuse and manipulation flourish unchecked. If we fail to implement substantive safeguards early, we risk normalizing a world where individuals are constantly at the mercy of AI-driven misinformation and exploitation. The technology itself is not inherently malicious; it is lax oversight, and a willingness to turn a blind eye to harm, that threatens to turn it into a tool of destruction. It is time for industry leaders to confront these issues head-on, recognize their ethical responsibilities, and build systems that prioritize human dignity over profit.
