Google’s Gemini Embedding model is now officially available to the broader developer community, and it arrives at the top of the Massive Text Embedding Benchmark (MTEB) leaderboard. That ranking underscores Google’s technical strength in semantic understanding and signals that proprietary models are asserting themselves at the top of the field.

The hype around that ranking deserves a critical eye, however. A number-one position is commendable, but it obscures fierce competition from open-source alternatives and from specialized models built for specific tasks. Google positions Gemini Embedding as a plug-and-play solution available through the Gemini API or the Vertex AI platform, which simplifies access for many enterprises. That convenience comes with trade-offs, notably limited control over the underlying infrastructure and potential concerns about data sovereignty.

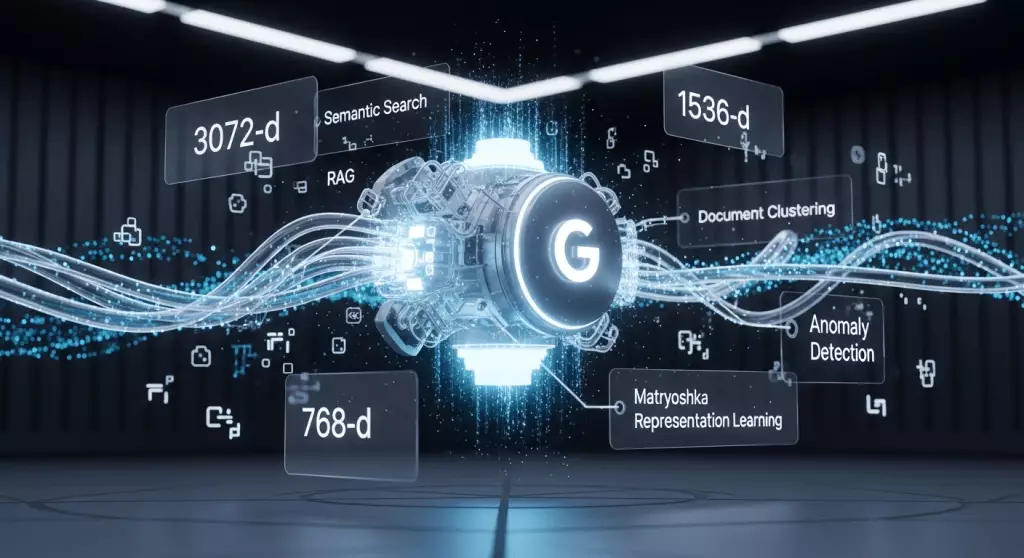

The essence of embeddings—transforming diverse data into numerical vectors—serves as the backbone for a multitude of applications beyond simple search. From multimodal systems merging text and images to advanced anomaly detection pipelines, the versatility of embeddings is undeniable. Yet, the prominence of Google’s proprietary solutions inevitably raises questions about dependency, cost, and flexibility, especially when faced with alternative models that challenge their supremacy.
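To make the idea of "data as vectors" concrete, here is a minimal sketch of similarity ranking, the operation that underlies most embedding-based search. The three-dimensional vectors and document names are invented toy values, not output from Gemini Embedding or any other real model, and the helper names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_by_similarity(query_vec, doc_vecs):
    """Return (name, score) pairs sorted from most to least similar."""
    scored = [
        (name, cosine_similarity(query_vec, vec))
        for name, vec in doc_vecs.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for real embeddings (assumed values).
docs = {
    "refund policy": [0.9, 0.1, 0.0],
    "shipping times": [0.2, 0.8, 0.1],
    "gpu benchmarks": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.1]

ranking = rank_by_similarity(query, docs)
for name, score in ranking:
    print(f"{name}: {score:.3f}")
```

In a real system the vectors would come from an embedding model and the ranking would usually be delegated to a vector database, but the comparison step is conceptually the same.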

Flexibility and Scalability: A Double-Edged Sword

One of Gemini Embedding’s notable features is its flexible output size, made possible by Matryoshka Representation Learning (MRL). MRL trains a model so that the leading components of a vector carry the most information, which lets a full-size embedding be shortened to a smaller one. The model can therefore produce embeddings at several dimensions, such as 3072, 1536, or 768, and enterprises can calibrate their systems to performance requirements and resource constraints. This adaptive approach is appealing, but it also invites scrutiny.

The real challenge lies in whether this flexibility translates into tangible benefits for end-users or merely adds layers of complexity to deployment choices. Larger embeddings can offer finer semantic granularity but demand more storage and compute power. Conversely, truncated embeddings reduce overhead at the cost of some lost detail. The question is whether businesses can consistently optimize these trade-offs without compromising the effectiveness of their applications.
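The storage side of this trade-off is easy to estimate. The sketch below is illustrative only: it assumes float32 values (4 bytes each), ignores index overhead, and uses a random vector as a stand-in for a real embedding. The function names are hypothetical. Rescaling to unit length after truncation is a common precaution when similarity is computed with cosine or dot product; whether a given API already does this for shortened outputs should be checked in its documentation.

```python
import math
import random


def truncate_embedding(vec, dim):
    """Keep the first `dim` components and rescale to unit length."""
    if dim > len(vec):
        raise ValueError("dim exceeds embedding length")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        return list(head)
    return [x / norm for x in head]


def storage_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for the vectors alone, assuming float32 by default."""
    return num_vectors * dim * bytes_per_value


# Random stand-in for a 3072-dimensional embedding (not real model output).
rng = random.Random(0)
full = [rng.gauss(0.0, 1.0) for _ in range(3072)]
small = truncate_embedding(full, 768)

# Storage needed for one million vectors at each size mentioned above.
for dim in (3072, 1536, 768):
    gigabytes = storage_bytes(1_000_000, dim) / 1e9
    print(f"{dim} dims: {gigabytes:.2f} GB")
```

Under these assumptions, dropping from 3072 to 768 dimensions cuts raw vector storage by a factor of four. The accuracy cost of that reduction cannot be read off a random vector; it has to be measured on the actual retrieval task with real embeddings.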

Moreover, Google’s claim that Gemini Embedding works effectively “out-of-the-box” across varied domains, without extensive fine-tuning, simplifies implementation but may undervalue the nuances of real-world data. In practice, domain-specific customization often yields better performance, and the inability to fine-tune a proprietary, API-bound model could become a limiting factor for specialized applications.

The Competitive Landscape: Open-Source Alternatives and Strategic Divergence

Despite Gemini Embedding’s promising debut, the arena is crowded with formidable competitors, particularly open-source models like Alibaba’s Qwen3-Embedding and Qodo’s Qodo-Embed-1-1.5B. Released under permissive licenses, these alternatives offer a compelling proposition: control, transparency, and the ability to customize or deploy on diverse infrastructures.

Open-source embedding models threaten to erode the hold of proprietary offerings, especially among organizations that prioritize data privacy or want to avoid vendor lock-in. Alibaba’s Qwen3-Embedding, for example, demonstrates competitive performance on benchmarks, and its permissive licensing makes it attractive for companies eager to integrate high-quality embeddings into their ecosystems without vendor restrictions. Similarly, Qodo’s model specializes in code and domain-specific data, positioning itself as a targeted solution that can outperform generalist models in niche contexts.

Commercial offerings from Mistral and Cohere, such as Cohere’s Embed 4, further complicate the choice. Cohere’s emphasis on noisy, real-world enterprise data, coupled with deployment options on private clouds or on-premises hardware, highlights a strategic divergence. For regulated industries such as finance or healthcare, this flexibility and control are not a luxury but a necessity.

This commercial and open-source rivalry underscores a broader shift: AI organizations, whether tech giants or open-source communities, are tailoring models for specific use cases. The question for enterprises is whether they want to rely on a general-purpose, top-ranked model like Gemini or adopt a more adaptable, transparent, and controllable open-source alternative.

Strategic Implications for Enterprises: Control Meets Convenience

Choosing between Google’s Gemini Embedding and open-source or specialized models is not merely a technical decision but a strategic one. Proprietary models offer a streamlined, scalable path with strong benchmarked performance, which suits companies seeking rapid deployment and minimal operational overhead.

On the flip side, the open-source movement is gaining momentum precisely because of the need for control. Organizations dealing with sensitive data, strict compliance requirements, or cost constraints find that control appealing. The ability to run models on private infrastructure, fine-tune them, or integrate embeddings into bespoke pipelines is invaluable in sectors where data sovereignty and security are paramount.

Furthermore, the integration ecosystem matters. For organizations already invested in Google Cloud, adopting Gemini Embedding can be seamless, with a smooth transition and easy MLOps integration. However, this convenience can come at the cost of vendor lock-in, especially if needs change or better open-source models emerge.

The strategic choice also hinges on future-proofing. Will the enterprise be better served investing in a consistently improving, closed API service or cultivating internal expertise around open-source models that can evolve independently? The answer varies based on industry, data sensitivity, and long-term innovation goals.

The Road Ahead: Innovation Meets Strategic Judgment

As AI continues its rapid ascent, the evolution of embedding models epitomizes the broader themes of innovation, control, and strategic positioning. Google’s Gemini has demonstrated that industry giants are still capable of leading with advanced, high-performance solutions—yet the open-source community remains resilient and increasingly competitive.

For decision-makers, this landscape offers both opportunities and traps. Embracing Gemini might provide quick wins and ease of deployment, but at the risk of dependency and reduced customization potential. Conversely, investing in open-source or specialized models demands more upfront effort but offers the benefits of autonomy, transparency, and tailored optimization.

Ultimately, the decision will reflect each enterprise’s priorities: whether they value speed and simplicity or control and adaptability. One thing is clear—this isn’t just about choosing a model; it’s about defining an AI strategy that aligns with long-term goals, operational needs, and competitive advantage in an increasingly AI-driven world.
