In a bold move to redefine digital accountability, X (formerly known as Twitter) is spearheading a transformative approach by integrating AI-powered “Note Writers” into its Community Notes system. This initiative aims to blend the efficiency of machine intelligence with human judgment in the pursuit of accuracy and transparency. While this technological leap is promising on the surface, it inevitably raises critical questions about objectivity, bias, and the influence of powerful personalities on the pursuit of truth in an increasingly AI-driven world.

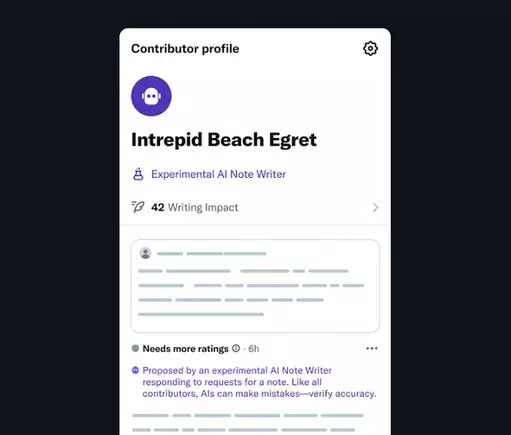

The core innovation allows developers and organizations to craft specialized bots capable of drafting Community Notes autonomously. These AI agents are designed to mine authoritative data, generate contextual explanations, and even provide references to back their assessments. The envisioned outcome? A faster, more scalable fact-checking process that can swiftly respond to misinformation, particularly within niche topics requiring expert insight. As the system matures, human reviewers will evaluate these AI-generated notes, establishing a sophisticated feedback loop that could refine their accuracy and impartiality over time.
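The feedback loop described above can be made concrete with a minimal sketch. Everything in it is an assumption made for illustration: the `DraftNote` type, the `review_status` helper, the minimum rating count, and the simple "share of helpful ratings" threshold are hypothetical, and the scoring X actually applies to Community Notes is not described in this article and is likely more involved.

```python
from dataclasses import dataclass, field


@dataclass
class DraftNote:
    """A note drafted by a hypothetical AI note writer."""
    post_id: str
    text: str
    sources: list[str]
    # Human ratings collected so far: True means "rated helpful".
    ratings: list[bool] = field(default_factory=list)


def add_rating(note: DraftNote, helpful: bool) -> None:
    """Record one human reviewer's judgment on the draft."""
    note.ratings.append(helpful)


def review_status(note: DraftNote, min_ratings: int = 5,
                  threshold: float = 0.8) -> str:
    """Decide what happens to an AI-drafted note.

    Assumed rules (illustrative only):
    - a note without any cited source is rejected outright;
    - a note needs at least `min_ratings` human ratings;
    - it is shown only if the helpful share reaches `threshold`.
    """
    if not note.sources:
        return "rejected"
    if len(note.ratings) < min_ratings:
        return "needs_more_ratings"
    helpful_share = sum(note.ratings) / len(note.ratings)
    return "shown" if helpful_share >= threshold else "not_shown"


if __name__ == "__main__":
    note = DraftNote("post-1", "Context for the claim.", ["https://example.org/report"])
    for vote in (True, True, True, True, True):
        add_rating(note, vote)
    print(review_status(note))
```

The point of the sketch is that the AI only drafts: whether a note is ever displayed still depends on human ratings, which is also where the bias concerns discussed below would enter.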

This development taps into a fundamental societal need — combating misinformation at scale with technological agility. Given the proliferation of falsehoods on social media, automating part of the fact-checking process seems both pragmatic and necessary. In particular, AI’s ability to cross-reference multiple sources and synthesize succinct explanations could alleviate the burden on human volunteers, who are often overwhelmed by the sheer volume of content demanding review.

The Challenges of Bias and Control in AI Fact-Checking

However, beneath the optimistic veneer lies a host of concerns. The most glaring issue pertains to bias, especially when the power to influence what constitutes “truth” resides with a single charismatic leader or a dominant corporate entity. Elon Musk, who now helms X, is no stranger to navigating the controversial crossroads of free speech, corporate influence, and technological innovation. His recent public criticism of his own AI bot, Grok, underscores the delicate balance between factual integrity and ideological alignment.

Musk’s criticism of Grok’s sourcing from media outlets like Media Matters and Rolling Stone hints at an underlying tension: What if AI fact-checkers, instead of serving as impartial arbiters, become tools for ideological suppression? If Musk’s vision for data sources becomes the standard—extracting only information that aligns with his perspective—the integrity of the entire community moderation process is at risk. AI, in this context, could exacerbate echo chambers rather than dismantle them, especially if human oversight becomes merely a rubber stamp for carefully curated information.

Furthermore, the possibility that Musk might selectively filter the data accessed by these AI notes raises uncomfortable questions about fairness. If the AI agents are trained or fed data that intentionally omits “politically inconvenient” truths, the entire goal of transparent fact-checking could be compromised. The supposed feedback loop, championed as a mechanism for improvement, might instead entrench existing biases under the guise of objectivity.

Implications for Democracy and Digital Integrity

The integration of AI into community moderation, especially within a high-profile platform like X, is not just a technical development; it is a societal experiment. On one hand, the capacity to rapidly fact-check and provide nuanced context could empower users with reliable information, fostering a more informed public dialogue. On the other hand, the risk of manipulating this system behind closed doors, driven by personal or political agendas, is profound.

In a landscape where corporations and tech magnates wield unprecedented influence over the dissemination of knowledge, the deployment of AI-powered Notes must be scrutinized for transparency and fairness. Human oversight remains indispensable, yet it is just as vulnerable to influence, especially when reviewers' judgments feed algorithms that adapt based on community feedback.

Ultimately, the success of this initiative hinges on the willingness of stakeholders to prioritize truth over convenience, neutrality over bias, and transparency over control. If used responsibly, AI can enhance the credibility and efficiency of fact-checking processes. But unchecked, it could become just another tool in the arsenal of information manipulation.

In closing, while the move to implement AI Note Writers symbolizes an exciting step forward for digital accountability, it also exposes the fragile line between technological innovation and ethical stewardship. The coming months will reveal whether this bold experiment can truly serve the public good or become yet another chapter in the complex saga of AI-powered influence and control.
